A Windows background tool that turns voice into typed text. Hold a hotkey, say something, release. The words appear at wherever your cursor is. No app switching, no clicks, no cloud.

The Problem

Windows dictation is broken for power users. Windows Voice Typing requires manual activation for every session and doesn’t work universally across apps. Dragon NaturallySpeaking costs hundreds per year and sends your audio to the cloud. Other Whisper-based desktop tools still require interaction: open a window, click record, click stop, copy the text, switch back, paste. Five steps for something that should be zero.

The goal was zero steps: hold, speak, release, text appears.

How It Works

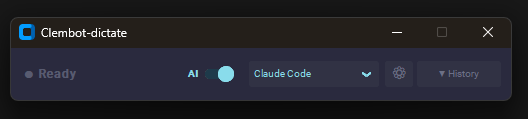

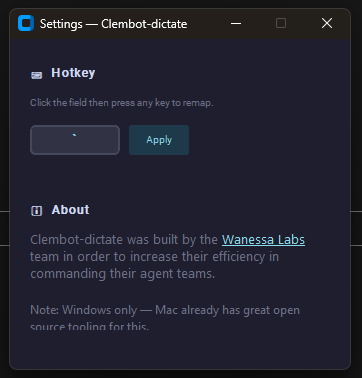

Clembot-dictate runs silently as a background process with a 52px header strip at the top of the screen. When you hold the configured hotkey (default: backtick), it starts capturing mic audio into an in-memory numpy buffer. On release, it passes the buffer directly to faster-whisper for local transcription, then routes the output through an optional AI refinement pass before pasting to the active window via pyperclip and a synthesized Ctrl+V.

The entire pipeline runs off the main thread. The hotkey listener never blocks.

hold hotkey → window_detector reads active app → Recorder captures audio

→ key release → Transcriber (faster-whisper, tiny model, CPU)

→ Refiner (Ollama local or Claude Haiku) → Paster (pyperclip → Ctrl+V)

→ text at cursorEnd-to-end latency target: under 3 seconds on CPU with the tiny model.

AI Refinement

Raw speech is messy. The optional refinement pass shapes the transcript based on context mode:

- Claude Code mode: Converts rambling speech into clean, precise developer commands. Strips filler words, preserves technical terms, outputs as an imperative instruction.

- Work writing mode: Polishes speech into professional written communication, matching register to the context.

Modes are defined in config.py. Add your own by editing a single dict.

Auto-Context Detection

At the moment you press the hotkey, before recording starts, Clembot-dictate reads which process is in the foreground using win32gui + psutil and switches to the matching context mode automatically. Switch from VS Code to Outlook: the mode switches without touching the UI.

Dual Backend

- Local (default): Ollama + Gemma 3 4B — runs on your machine, free, no API key required. Model is warmed at startup to eliminate cold-start latency.

- Cloud: Claude Haiku — higher-quality refinement for approximately $0.001 per dictation. One config change to switch.

History Panel

Every dictation stores both the raw transcript and the AI-refined version in a scrollable history panel. Re-run AI refinement with a different mode, copy any entry to clipboard, or switch backends mid-session. The panel is hidden by default and expands from the header strip on demand.

Stack

| Component | Role |

|---|---|

sounddevice | Streams float32 audio from mic into numpy buffer while hotkey is held |

faster-whisper | CTranslate2-based Whisper, 4x faster than openai-whisper on CPU. Tiny model, int8 quantized. Accepts numpy array directly, no WAV file I/O |

keyboard | Global hotkey listener, suppress=True prevents raw keystroke from typing. Works system-wide without admin rights |

pyperclip + pyautogui | Saves previous clipboard, writes transcript, fires Ctrl+V, restores clipboard |

win32gui + psutil | Reads active window process name for auto-context switching |

customtkinter | Dark-themed UI (Catppuccin Mocha) — 52px header strip, expandable history panel |

pystray | System tray icon, turns red while recording |

ollama | Local LLM client, Gemma 3 4B default |

anthropic | Claude API client for cloud refinement, Haiku by default |

Runtime: Python 3.10+, Windows 10/11. No installer, no admin rights required for most configurations.